How Major Reasoning Models Converge to the Same “Brain” as They Model Reality Increasingly Better

This may be one of the most interesting discoveries (and topics) in artificial intelligence, leaving aside the debate about whether this is intelligence at all.

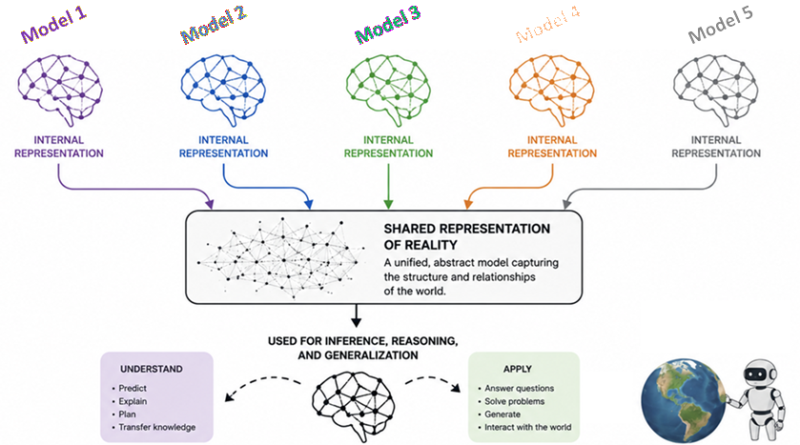

We (I at least!) assume that if you trained one AI model purely on, say, images and another purely on text, they would develop entirely different ways of “thinking” (without entering the discussion about what exactly this means). The intuition is that they use completely different architectures and process completely different data, so they should, by all logic, have completely different “brains”, even if both are good at their respective tasks with images and text.

But according to some exciting research from various groups, that isn’t the case at all!

Already in 2024, researchers at MIT presented evidence that major AI models are converging toward the same “thinking core” (or brain, or whatever you want to call it). As these models get bigger and more powerful, they seem to arrive at increasingly similar conclusions about how the world is structured. Maybe this wasn’t obvious with the early models, because they were bad at reasoning; but it becomes more and more evident as they get better. And the reason, I would argue, is that if they are all correct then they MUST be creating a very similar representation of reality.

The allegory of the (AI) cave

To understand why this is happening, some researchers looked back 2,400 years to Plato’s “Allegory of the Cave”, which is where preprint titles such as “The Platonic Representation Hypothesis” come from. Essentially, Plato believed that we humans are like prisoners in a cave, watching shadows flicker on a wall. We think the shadows (our perceptions) are “reality”, but they are actually just projections of a deeper, hidden, more complex reality existing outside the cave.

One of the many papers I read to prepare this (links at the end) argues that AI models are doing the exact same thing, and that in doing so they converge to the same model of how the world works in order to understand the input shadows.

The billions of lines of text, the trillions of pixels in images, and the endless audio files used to train our modern AI models are just their perception (“shadows”) of our world. These powerful models are looking at these different shadows of human data and, completely independently, they seem to be discovering the same underlying structure of the universe to make sense of it.

Different eyes, same vision

Here is the mind-blowing part, to me at least: A model that only “sees” images and a model that only “reads” text are measuring the distance between concepts in the exact same way (if they are both good enough).

If you ask a vision model to map the “distance” between a picture of a “dog” and a picture of a “wolf”, and then ask a language model to map the distance between the word “dog” and the word “wolf”, the mathematical structures they build become more and more similar as the models get better at distinguishing the two animals.

In other words, it seems that as these models scale up and become better, they stop being a mess of random connections. Research shows that they tend to align, and in particular that as vision models and language models get larger, the way they represent data becomes more and more alike. So amazing, don’t you think?
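To make the idea of “measuring distances in the same way” a bit more concrete, here is a minimal toy sketch in Python. It is not the code from the paper; it only follows the spirit of the nearest-neighbor alignment scores used in this line of research. Everything in it is an assumption made for illustration: the hidden “reality” is a set of random latent vectors, each “model” (the hypothetical `toy_model`) is just a random linear view of those latents plus noise, and the score (the hypothetical `mutual_knn_alignment`) asks how often the same concept has the same nearest neighbors in both spaces.

```python
import math
import random


def nearest_neighbors(vectors, k):
    """For each vector, return the index set of its k nearest neighbors (Euclidean)."""
    result = []
    for i, v in enumerate(vectors):
        ranked = sorted((math.dist(v, w), j) for j, w in enumerate(vectors) if j != i)
        result.append({j for _, j in ranked[:k]})
    return result


def mutual_knn_alignment(space_a, space_b, k=5):
    """Average fraction of k-nearest neighbors shared by the same item in both spaces."""
    nn_a = nearest_neighbors(space_a, k)
    nn_b = nearest_neighbors(space_b, k)
    return sum(len(a & b) / k for a, b in zip(nn_a, nn_b)) / len(nn_a)


def toy_model(latents, out_dim, noise, rng):
    """Hypothetical 'model': its own random linear view of the latents, plus noise."""
    in_dim = len(latents[0])
    weights = [[rng.gauss(0, 1) for _ in range(in_dim)] for _ in range(out_dim)]
    return [
        [sum(w * x for w, x in zip(row, z)) + rng.gauss(0, noise) for row in weights]
        for z in latents
    ]


rng = random.Random(0)
# Hidden "reality" (an assumption of this toy): 60 concepts, 4 latent factors each.
reality = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(60)]

# Two "capable" models (low noise) and two "weak" ones (high noise).
# Each model gets its own random weights, so no two share an embedding space.
good_vision = toy_model(reality, 64, 0.05, rng)
good_text = toy_model(reality, 64, 0.05, rng)
weak_vision = toy_model(reality, 64, 10.0, rng)
weak_text = toy_model(reality, 64, 10.0, rng)

strong_score = mutual_knn_alignment(good_vision, good_text)
weak_score = mutual_knn_alignment(weak_vision, weak_text)
print(f"capable models: {strong_score:.2f}, weak models: {weak_score:.2f}")
```

In this toy setup the two low-noise models should agree on neighborhoods far more often than the two noisy ones, even though their coordinates have nothing in common. That is the flavor of the claim: what converges is the structure of distances, not the raw numbers.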

Why scale changes everything

According to the research available, this all seems to be quite universal, happening with modern models from all companies and trained on different sources, as long as the model itself is capable enough. In fact, as a model gets larger, whatever it is, it appears to undergo a “phase change” in its internal thinking. Research seems to indicate that these models stop simply memorizing their specific tasks and instead start to build up a statistical model of reality itself.

And apparently, this happens because of some “selective pressure” acting on the models:

- Task generality: If you want an AI to be good at everything, there are very few ways to represent the world that work for all tasks at once without overfitting to any of them. Since there’s essentially only ONE best way, they must all get to it!

- Capacity: Large models have the “room” to find the most elegant, simple solution. But having ample room in terms of architecture or number of parameters must be balanced with avoiding overfitting.

- Simplicity bias: Deep networks actually prefer simple solutions over complex ones, again especially if overfitting is avoided.

One important thing is that different AI models might respond to these selective pressures at different speeds (or with different levels of efficiency); but they all seem to be heading toward the same final state of maximal understanding, achieved through the same internal representation of how the world works.

The most modern research on “knowledge mechanisms”

If I were 25 years younger and had to choose a career now, I would probably choose something like computer science mixed with psychology. Because to me, here’s where the most exciting part of the AI world is. Read why!

A recent survey on “knowledge mechanisms” in LLMs adds another layer to everything described above. It suggests that knowledge in these models isn’t just scattered randomly; rather, it evolves from simple memorization to complex “comprehension and application”. There would then be some kind of “dynamic intelligence” at play. The trend for knowledge and capability to converge into the same representation spaces seems to hold across the whole family of artificial neural models, regardless of data, modality, or objective. Even knowledge that we humans haven’t quite grasped yet (or that only domain-specific experts grasp, say, how a composer builds up music or how a physicist explains why photons can entangle) is being mapped by these models as they find patterns (in music or quantum physics, to follow the example) that our biological brains can’t process as quickly.

Why this is so cool, and an analogy with how we humans learn

This is one of those rare moments where math, computer science, and philosophy collide. It seems to me like the models are building a unified perception of reality in the only way they can ingest it: as words, images, and sounds. That is not really so different from how babies learn, though perhaps in a different order and with more inputs, including physical ones (touch, bumps, etc.), and of course coupled to physical outputs (crying, laughing, moving limbs, walking, …).

Inside our brains, after all, multimodality is also all integrated and works under the same global understanding (which, yes, can be tricked with illusions, but that’s for another day!). In other words, our brains also map everything to a single reality, which is our personal interpretation of how the world works. If I take a photo of an apple, if I write the word “apple”, if I record the sound of someone biting an apple, those are three different “shadows”, but they are all projected from the same actual, physical reality of the apple. Even if the apple is one color or the other, even artificially painted, or if I write the word in another language, “manzana” or “pomme”, then as long as I speak Spanish or French respectively, it is still a shadow of that same apple.

Global representation of reality + physical inputs + physical outputs –> robots

The whole thing above, including the analogy with babies and humans, can then be extrapolated more and more. Or at least I like to think that.

Add capabilities for physical inputs and outputs to a global, well-working AI model of reality, and we have a robot that can learn to interpret and interact with the world. Hard-wire into it an “instinct” to survive, and well… who knows where this can go. But we aren’t too different from that construction, don’t you think?

I will leave this here, so as not to enter that debate, but please share your thoughts with me about it!

Let’s close with the research-based evidence that we are no longer just building tools to help us write emails, summarize documents, write code, or edit and generate photos. We are building digital mirrors of the universe. And we are watching, in real time, as silicon and code independently discover the inner workings of the world we live in.

References

To write this post I went in detail through these two super-interesting works: a study presented as a preprint in 2024, and a more recent review about knowledge mechanisms in LLMs:

The Platonic Representation Hypothesis: https://arxiv.org/html/2405.07987v5

Knowledge Mechanisms in Large Language Models: A Survey and Perspective: https://aclanthology.org/2024.findings-emnlp.416/