ChatGPT now lets you nominate a Trusted Contact who gets alerted if your interaction with AI ‘indicates a serious safety concern’

- ChatGPT is introducing a new Trusted Contact feature

- Your contact gets alerted if the AI detects safety concerns

- The feature works on top of existing well-being features

Some people are having pretty intense conversations with AI chatbots, and ChatGPT developer OpenAI has now added a Trusted Contact feature that lets users nominate someone they trust to receive an alert if there’s a safety concern.

For a while now, if your conversations have taken a turn towards self-harm or suicide, ChatGPT has recognized this and directed you towards crisis support helplines or the emergency services to get some help.

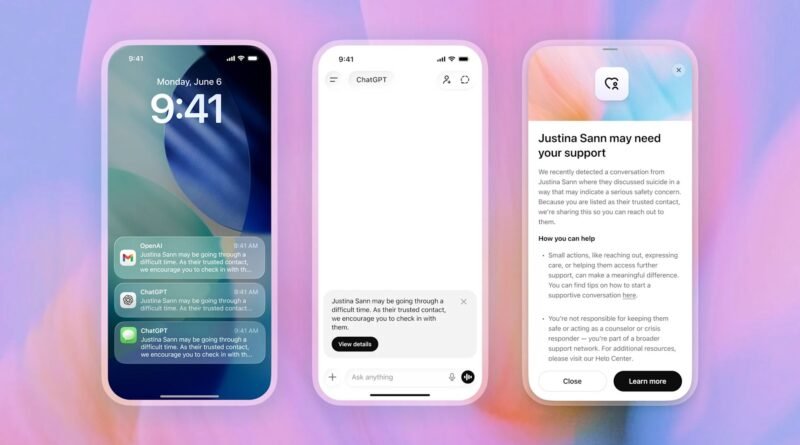

The Trusted Contact feature works in addition to those protections: the friend or relative you’ve specified will get a message over text, email, and the ChatGPT app, saying that you might be in trouble and that the contact should check in with you.

“Expert guidance identifies social connection as one of the most important protective factors to reduce suicide risk,” says OpenAI. “Trusted Contact is designed to encourage connection with someone the user already trusts. It does not replace professional care or crisis services, and is one of several layers of safeguards to support people in distress.”

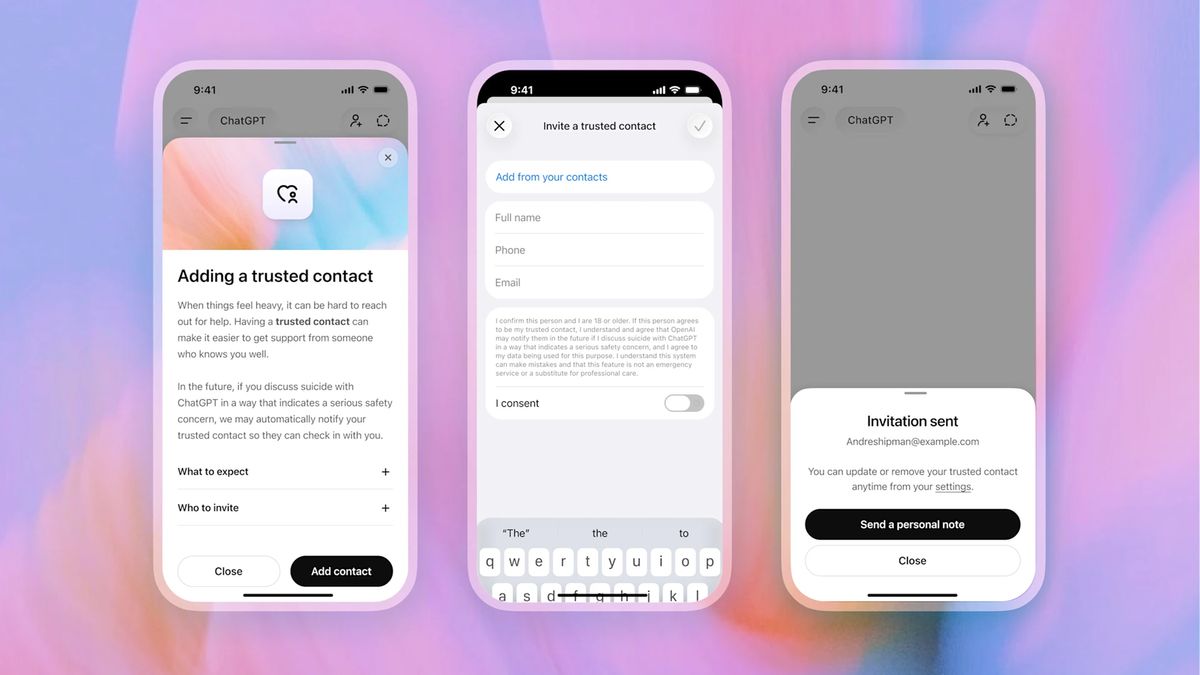

If you’re the one talking about self-harm or suicide, you’ll also get prompts to reach out to your nominated contact, with some AI-generated ideas for what to say. OpenAI says the feature has been developed in partnership with mental health professionals and experts, and works in a similar way to the existing parental controls, but for those 18 and over.

You can set your Trusted Contact through the ChatGPT settings panel, and it might just save your life. The person you specify has a week to accept the request; if they don’t, you can pick someone else.

Before a Trusted Contact gets alerted, “a small team of specially trained people” will review the chat within an hour. If that review confirms that there’s a serious safety concern, the contact gets pinged as described above.

The alert is “intentionally limited”, OpenAI says, and won’t include specifics from the chat itself. The feature is rolling out gradually starting now, so if you don’t immediately see it in your account, it should show up soon.
